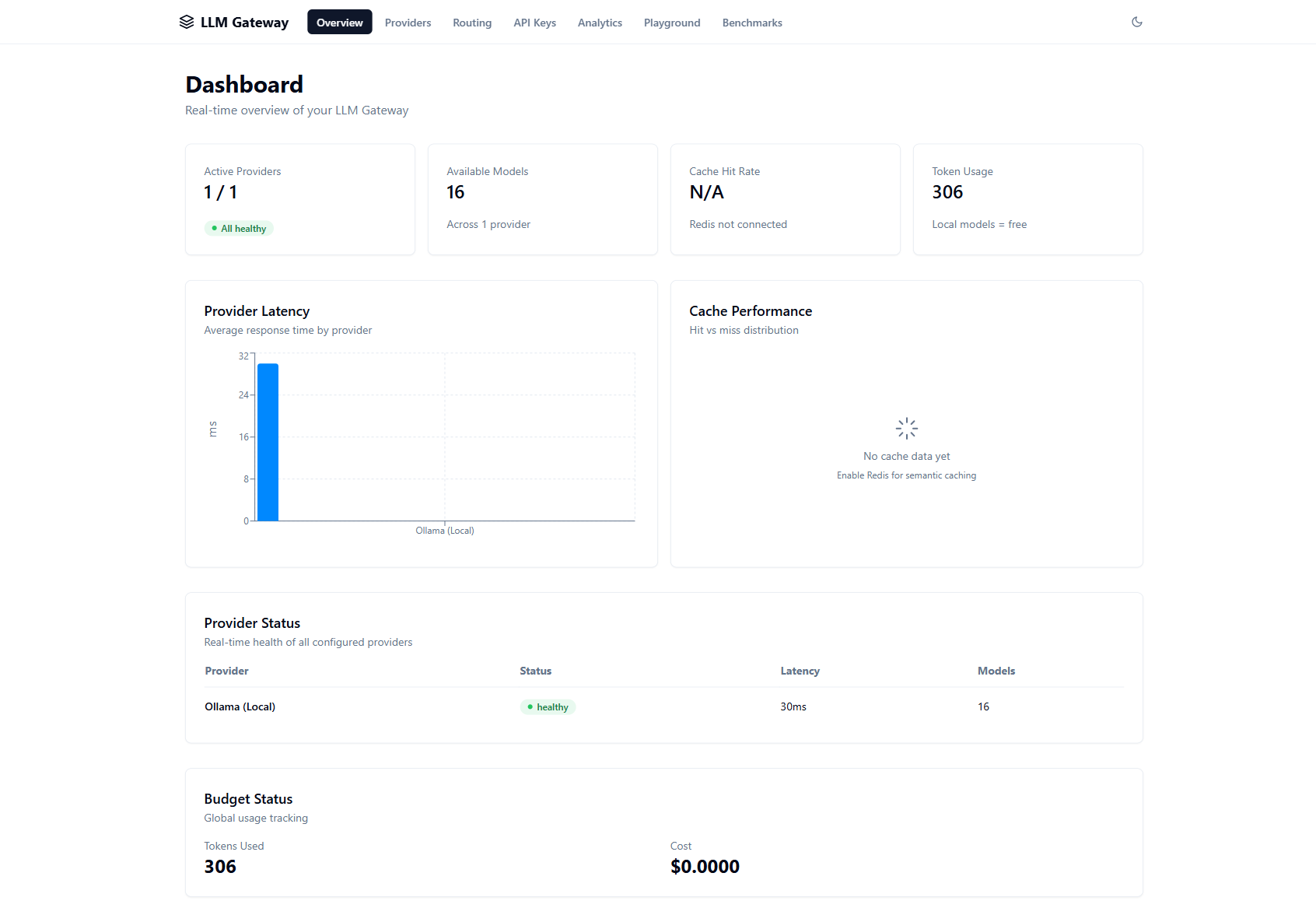

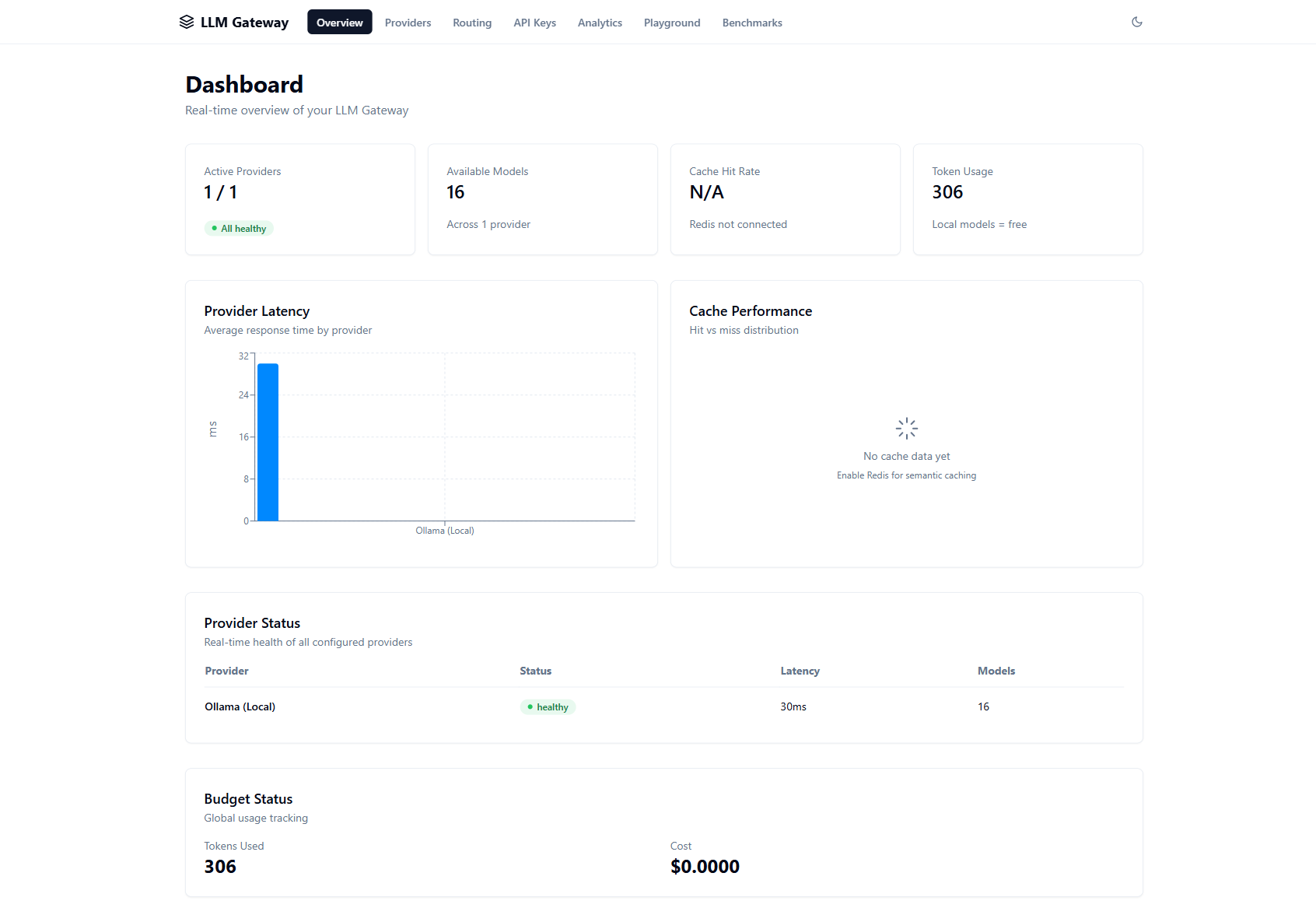

A full-featured admin dashboard with real-time monitoring, routing configuration, and an interactive playground.

Overview — Provider health, latency charts, token usage, budget tracking

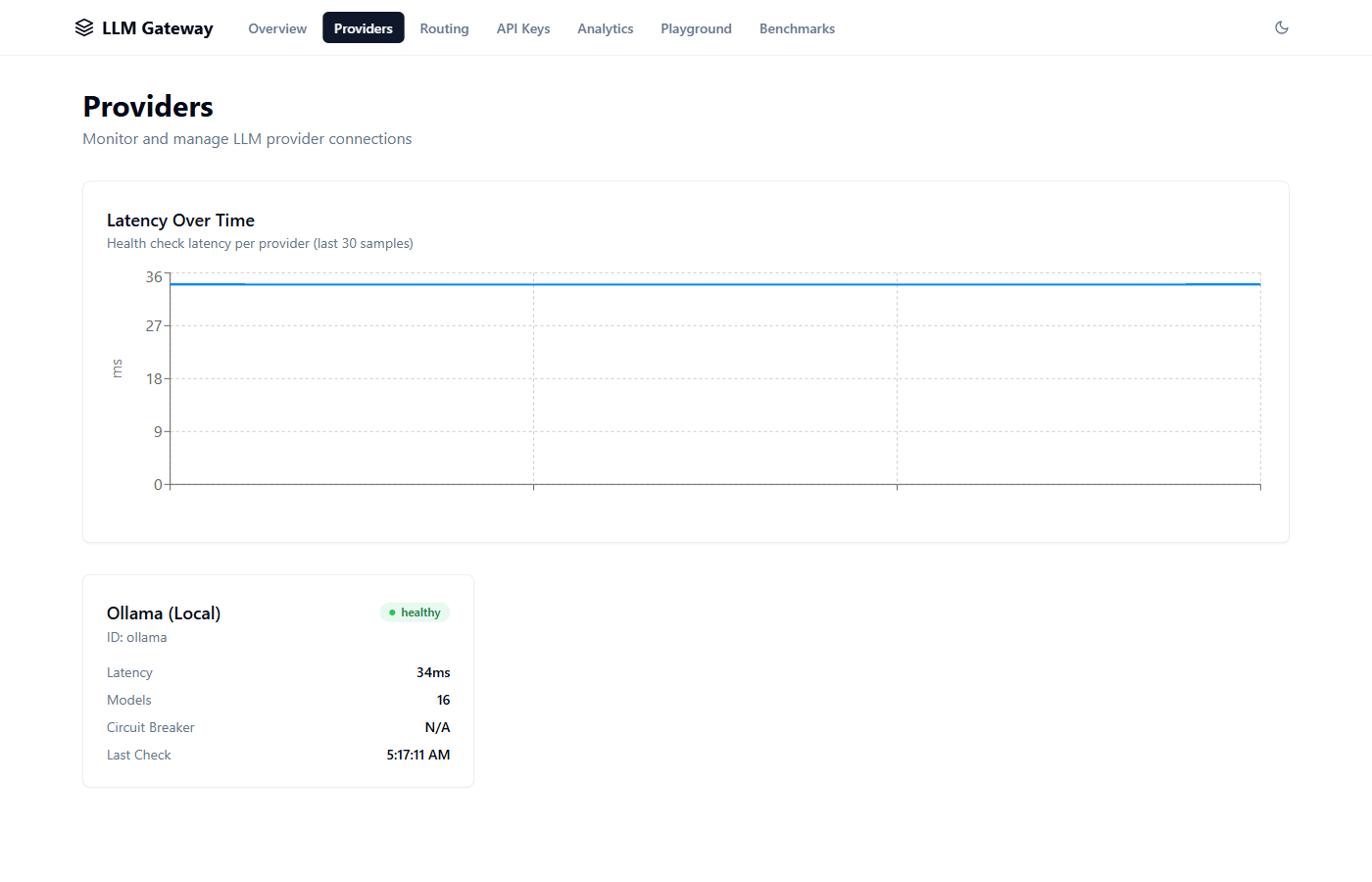

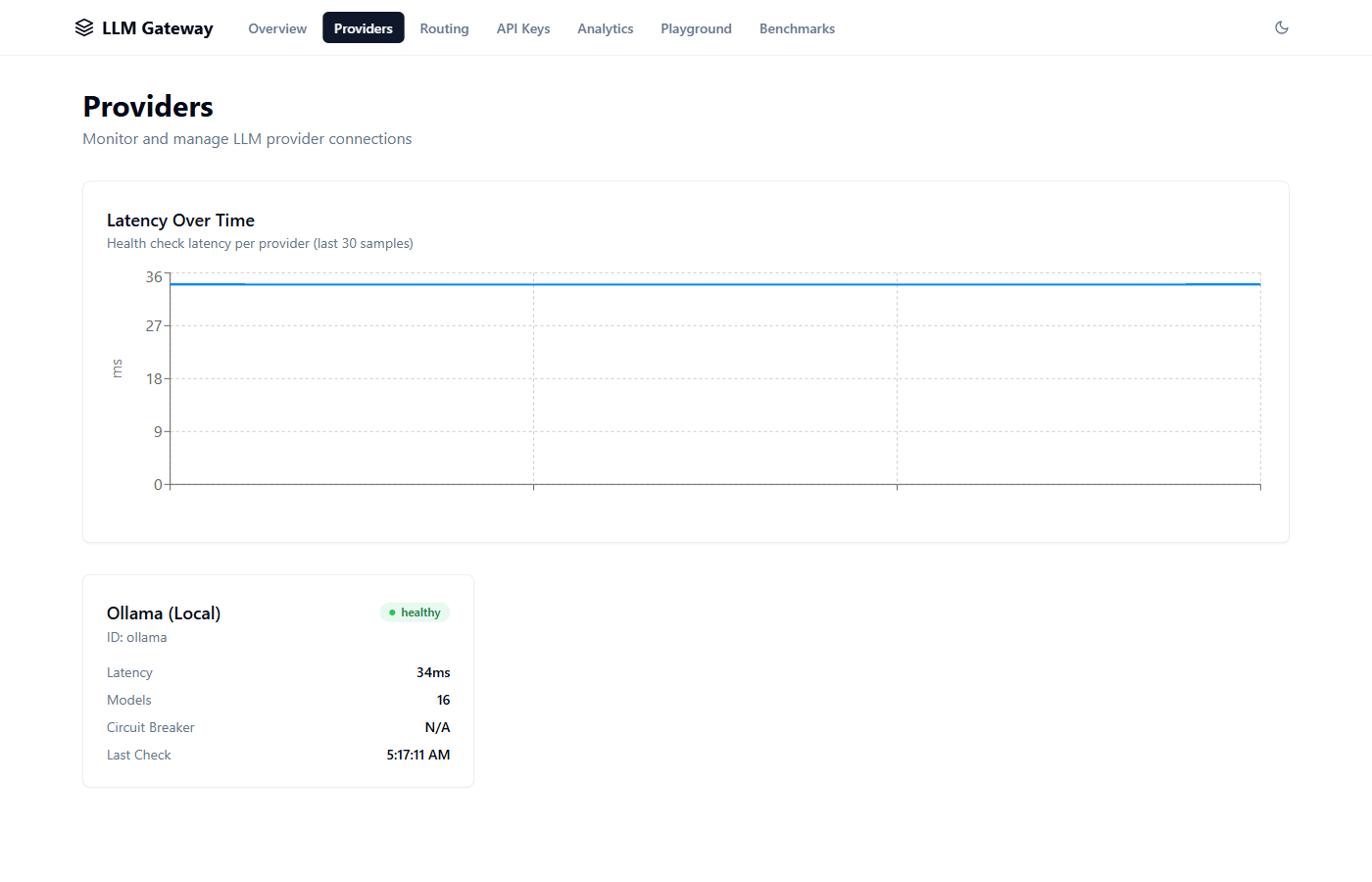

Providers — Real-time latency monitoring and health status

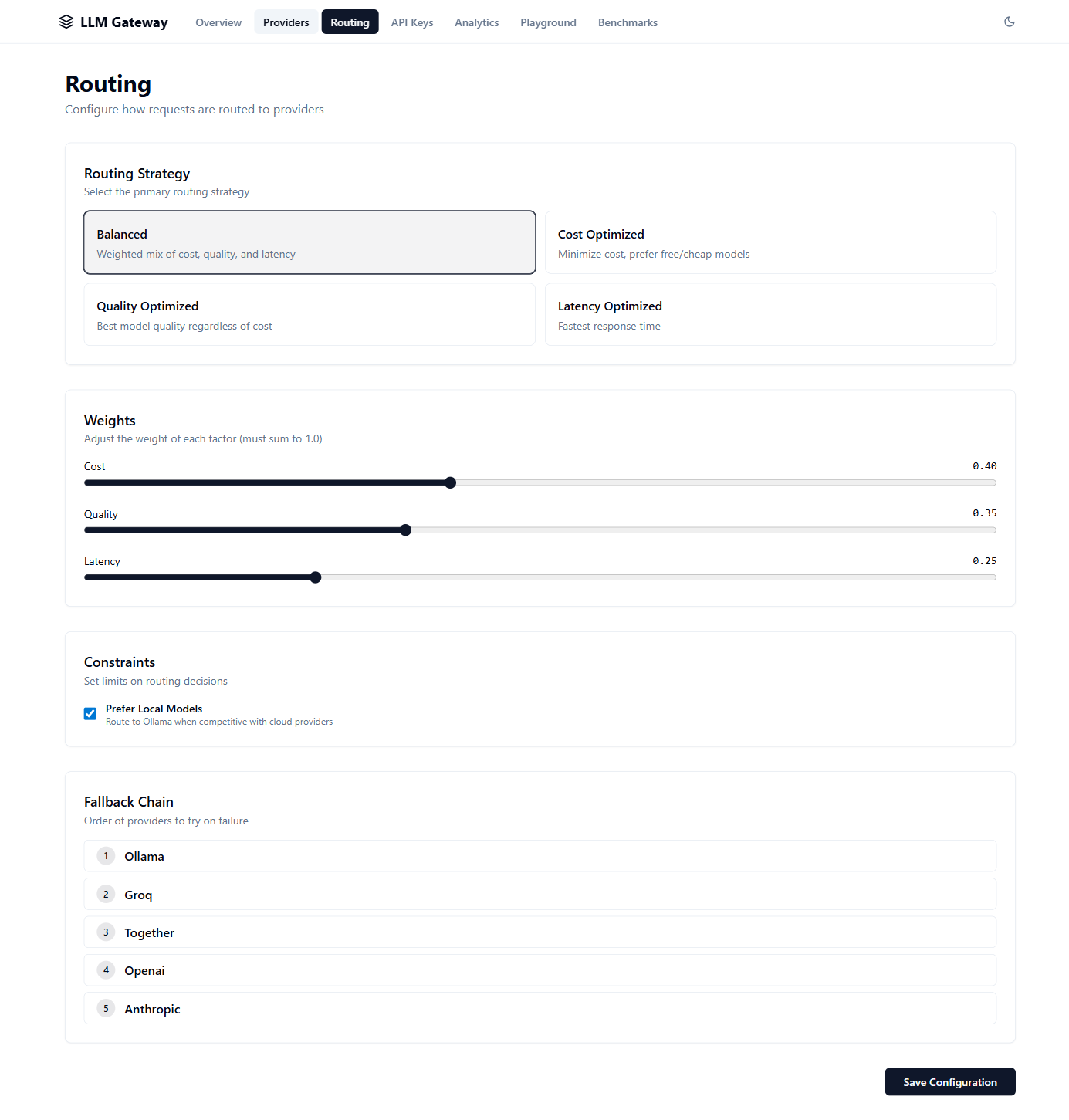

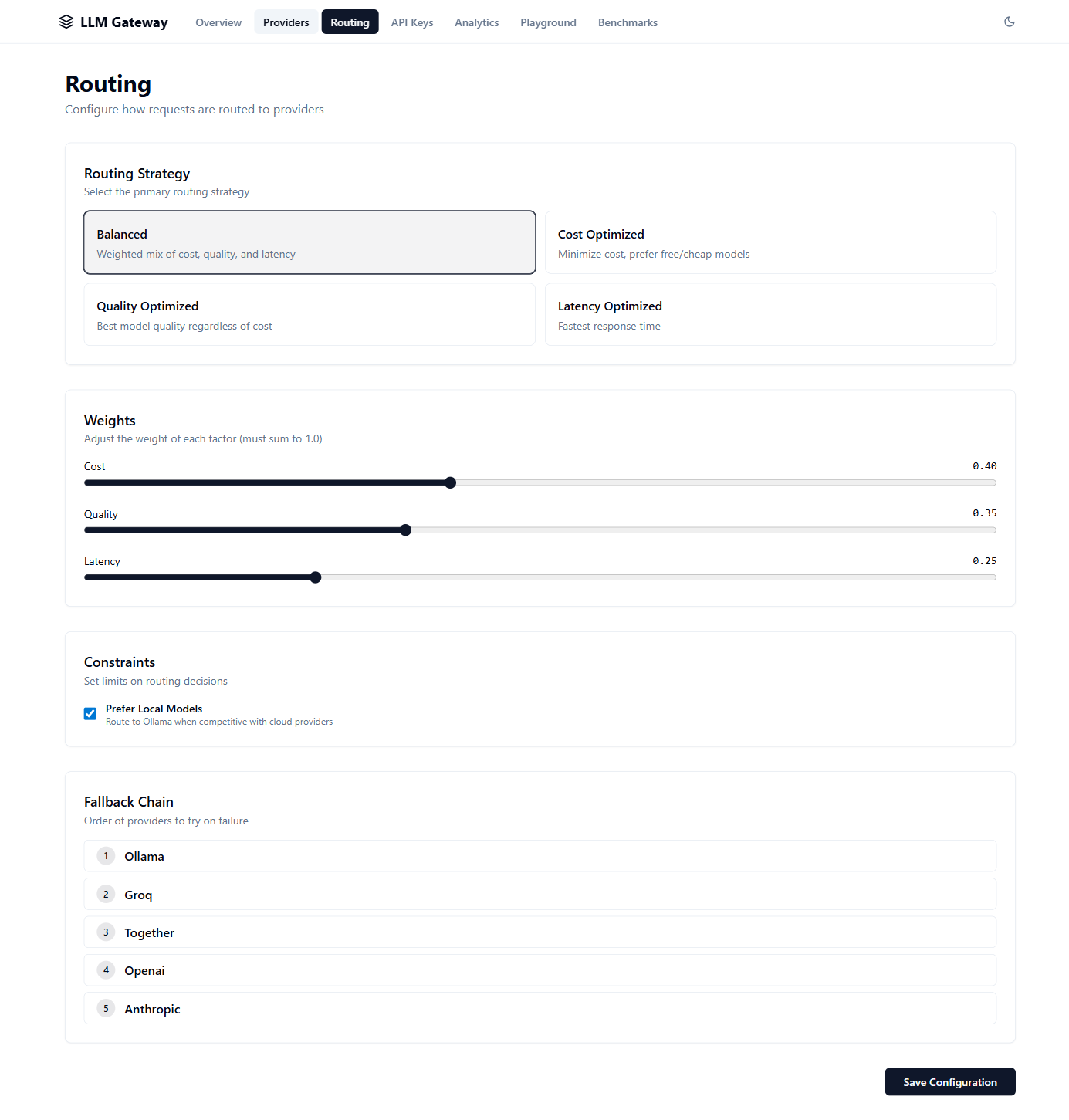

Routing — Strategy selector, weight sliders, fallback chain config

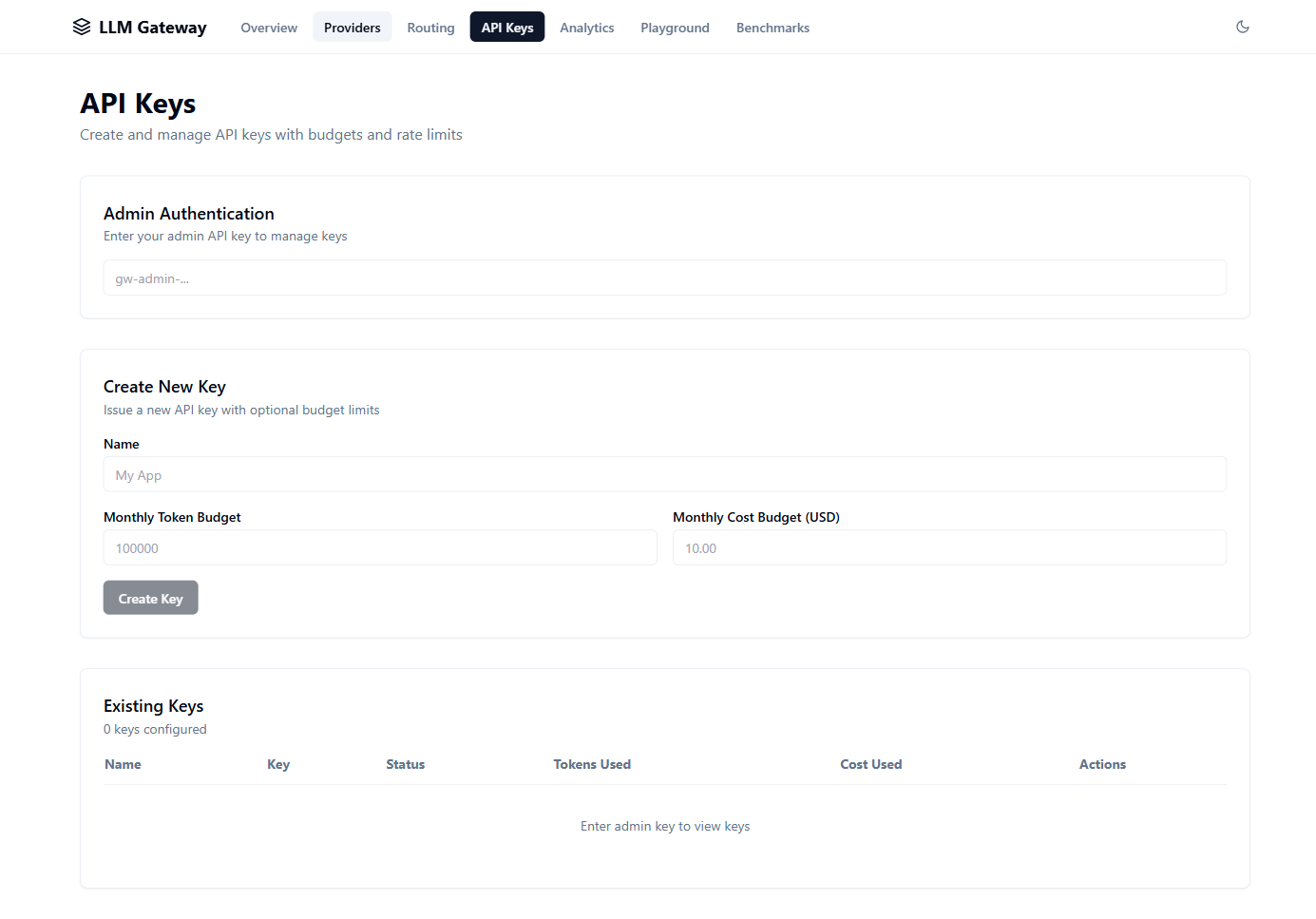

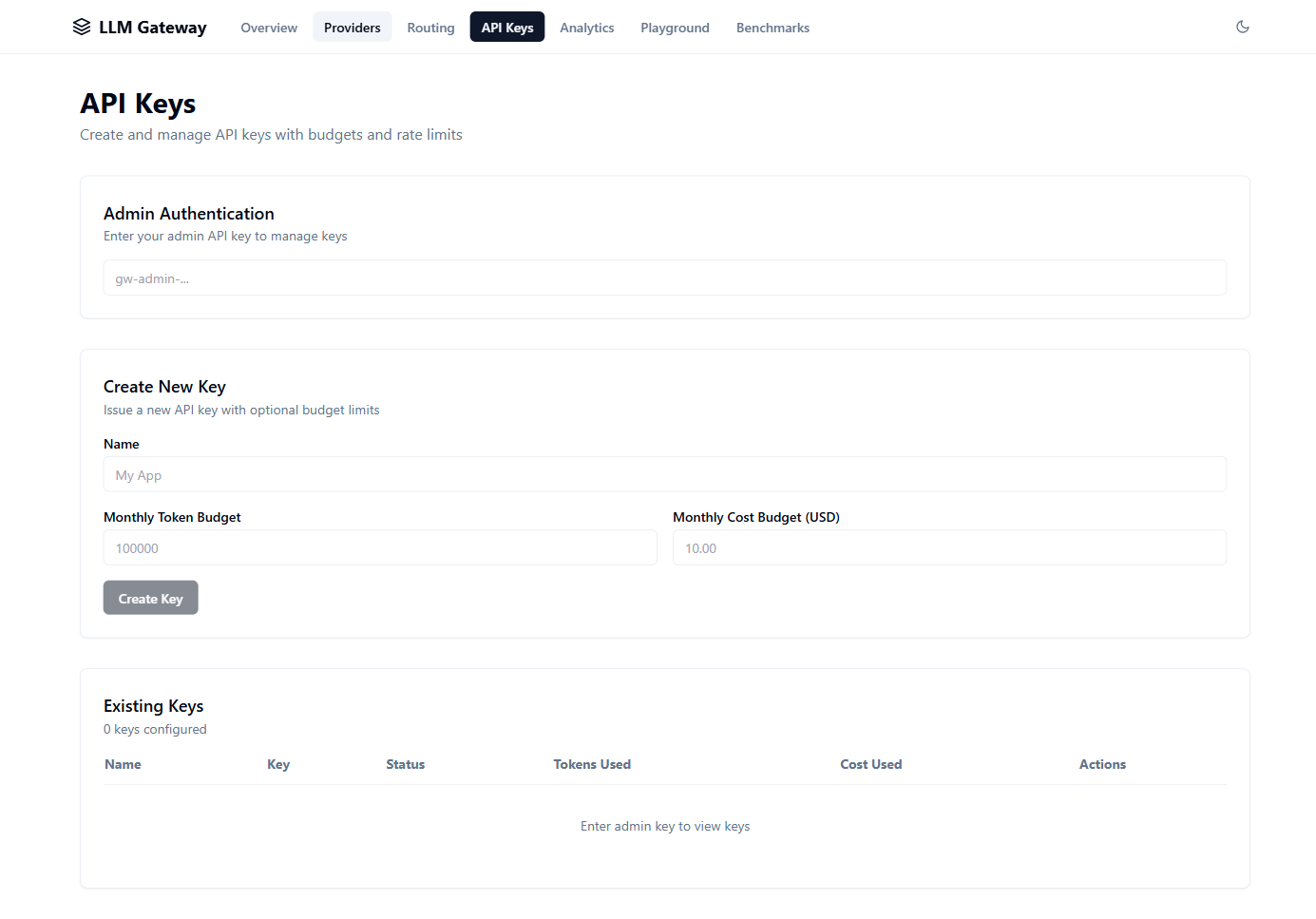

API Keys — Key management with per-key budget limits

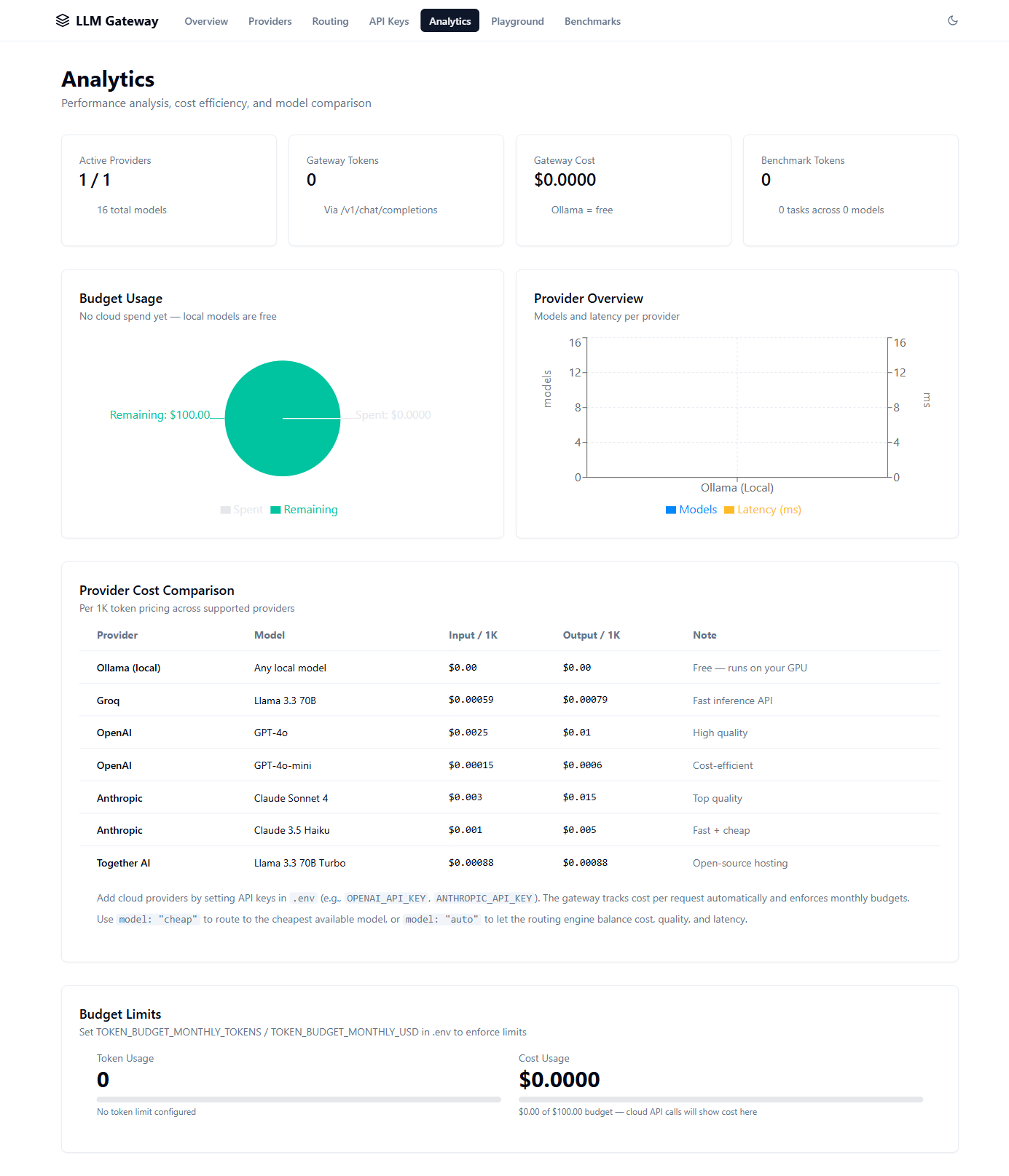

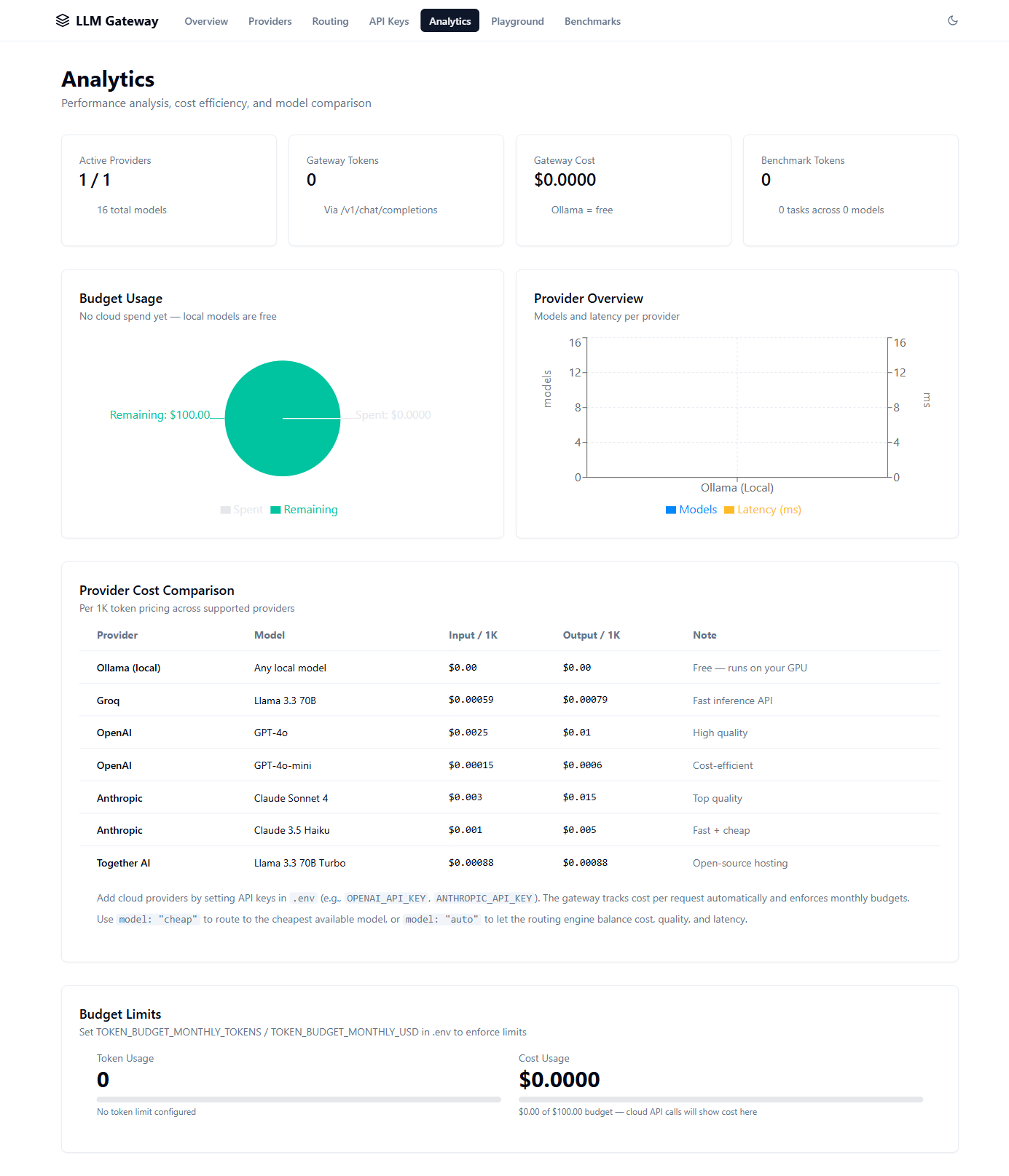

Analytics — Cost breakdown, provider usage, token consumption

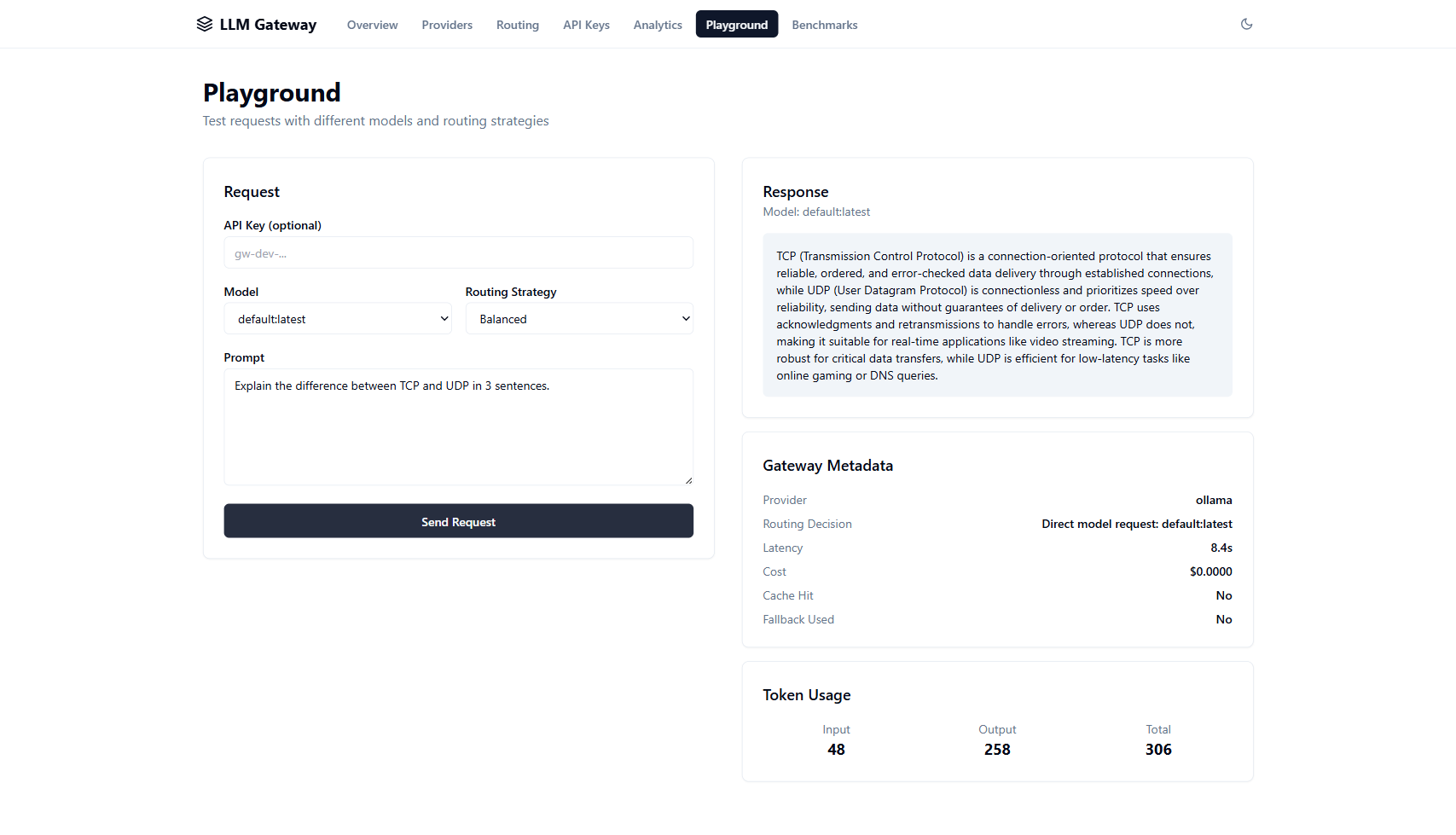

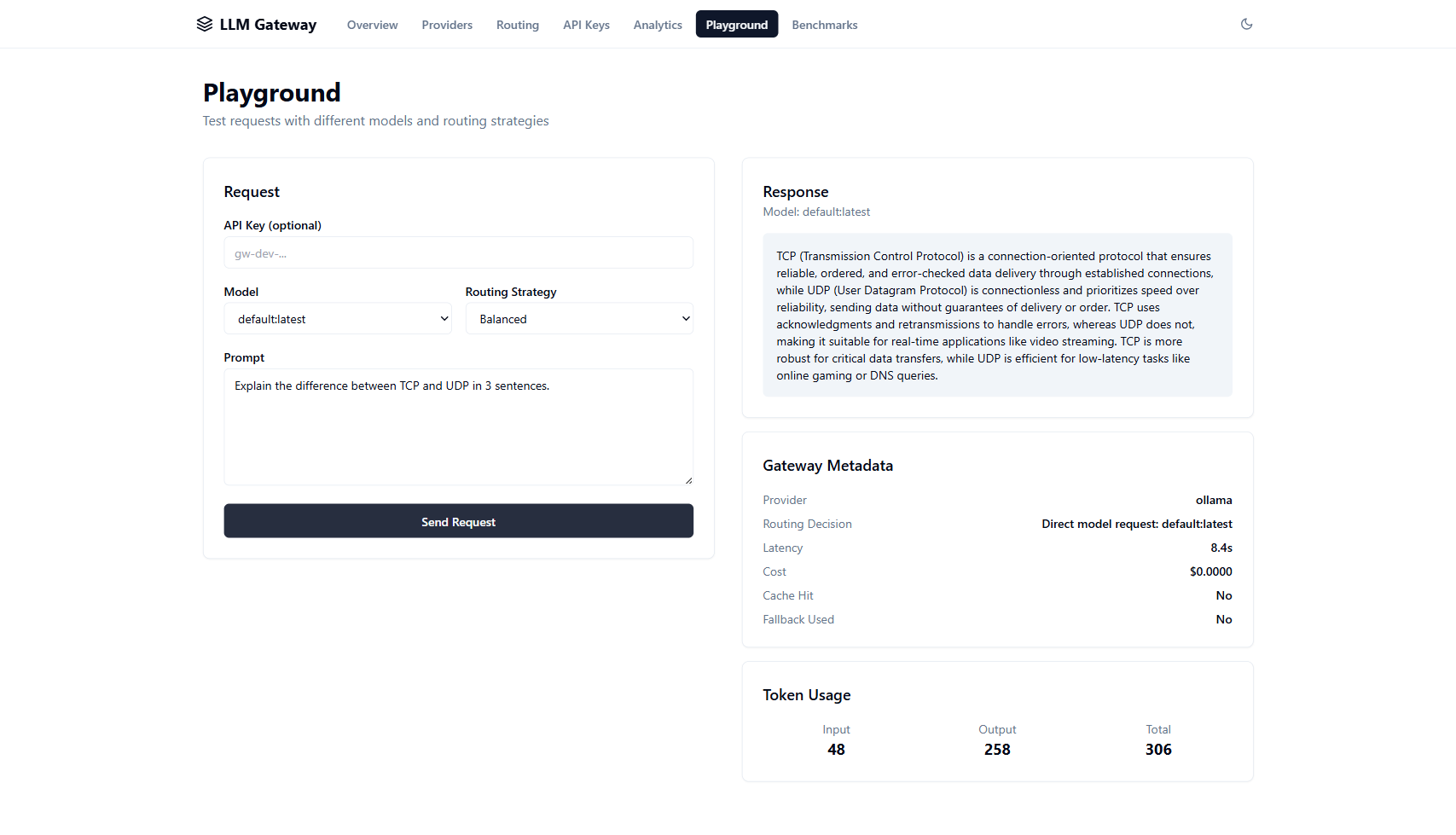

Playground — Interactive prompt testing with routing metadata

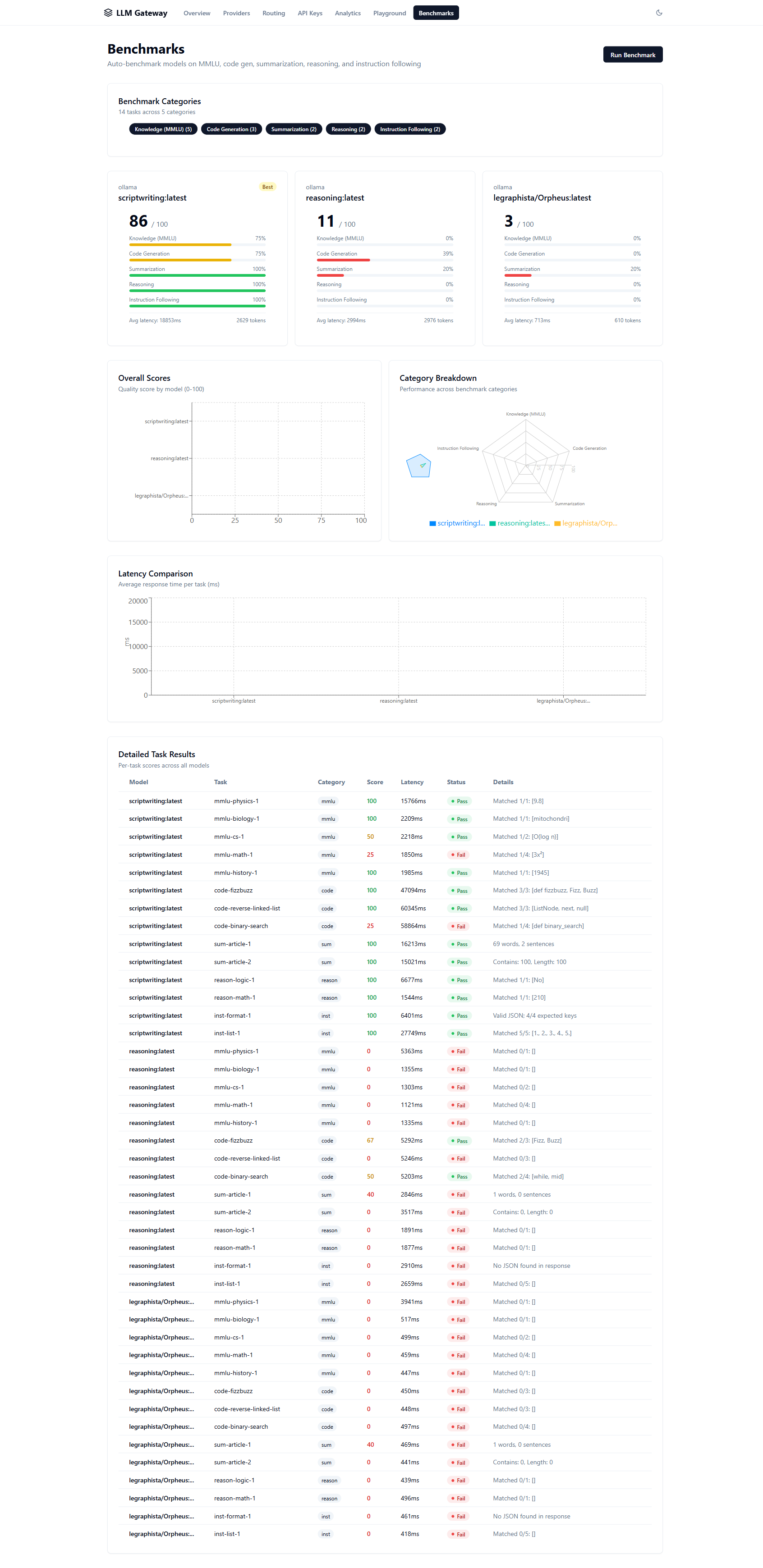

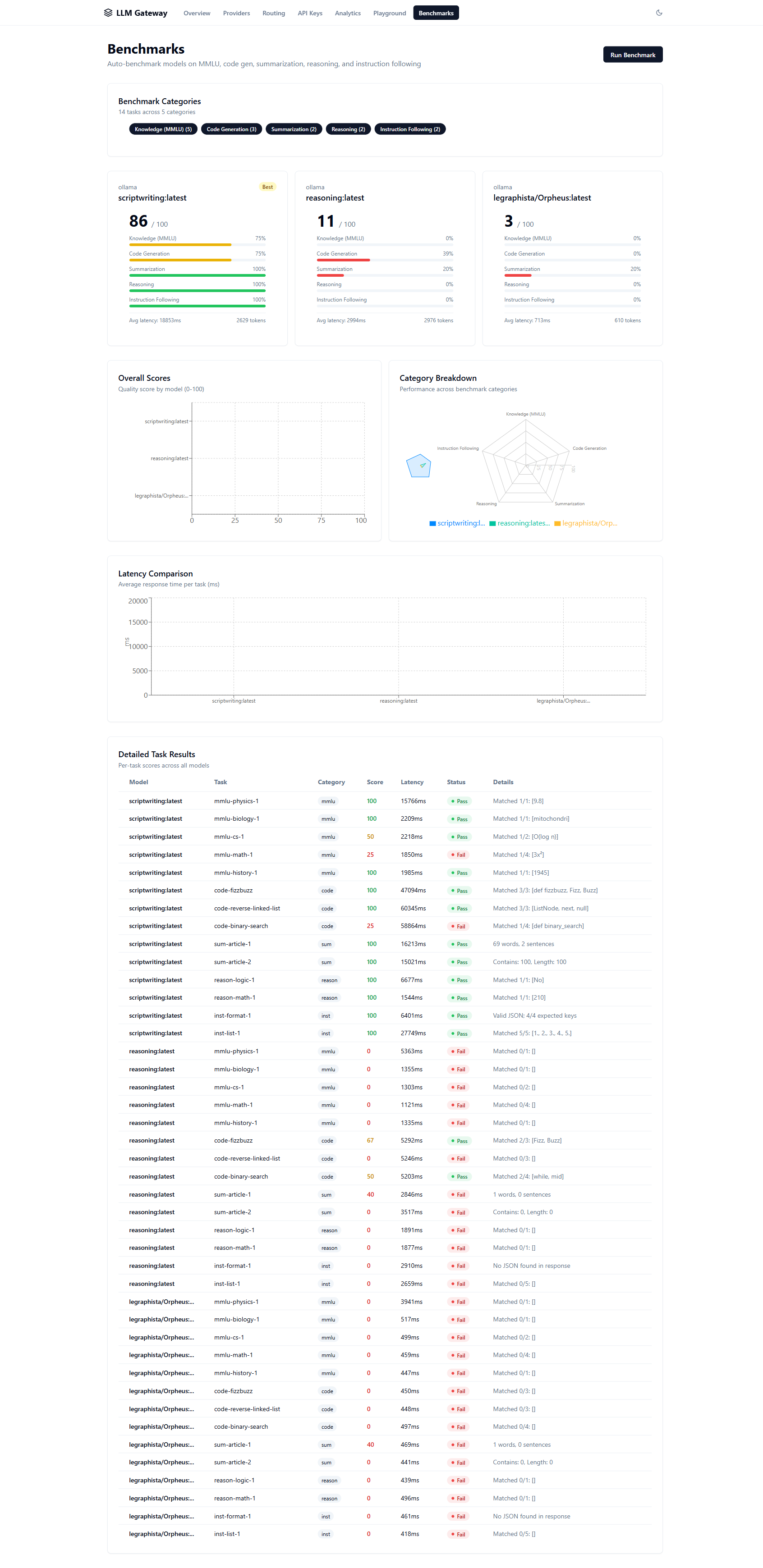

Benchmarks — Model comparison with scorecards, radar charts, and detailed results